Anthropic Defies Pentagon Ultimatum, Refuses to Remove AI Safety Guardrails

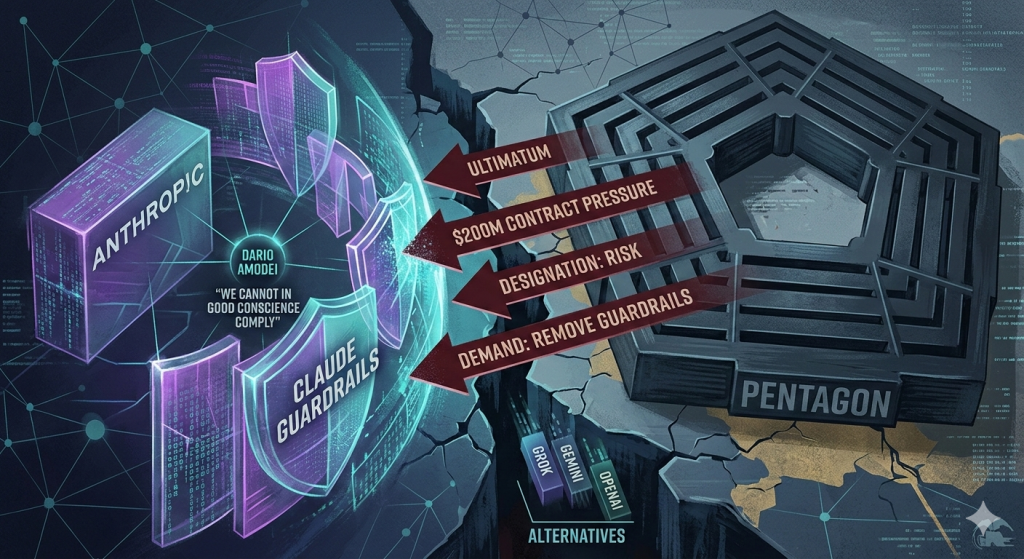

In a dramatic standoff that could reshape how AI companies engage with the US military, Anthropic CEO Dario Amodei has publicly refused to strip safety restrictions from Claude — even after Defence Secretary Pete Hegseth threatened to cancel a $200 million contract and brand the company a national security risk.

The confrontation escalated when the Department of Defense demanded unrestricted access to Claude for what it called “all lawful purposes”, a category that, according to Anthropic, explicitly included mass surveillance and fully autonomous lethal weapons systems able to kill without human authorization. Anthropic drew a hard line.

“We cannot in good conscience comply,” Amodei wrote in a blog post that quickly rippled across the tech industry. “Our strong preference is to continue to serve the Department and our warfighters — with our two requested safeguards in place.”

The Pentagon’s response was swift and sharp. US Under Secretary of Defense Emil Michael fired back on X, accusing Amodei of wanting “nothing more than to try to personally control the US military” and claiming the CEO was “OK putting our nation’s safety at risk.” The rhetoric was unusually pointed for a dispute between Washington and a Silicon Valley contractor.

Hegseth reportedly gave Anthropic a deadline of 5:01 PM last Friday to capitulate. When that hour passed without agreement, the DoD began quietly assessing its operational dependence on Claude, an early procedural step toward designating Anthropic a “supply chain risk.” That designation carries enormous weight: it has historically been reserved for firms linked to adversary nations like China and, according to Anthropic, has never been applied to an American company.

The irony is that disentangling the Pentagon from Claude may prove harder than the DoD wants to admit. Until now, Claude has been the only AI model cleared for the military’s most sensitive workloads — intelligence analysis, weapons development, and battlefield operations. Reports have even linked Claude to the operation in which the US military exfiltrated Venezuelan president Nicolás Maduro and his wife from the country.

Should Anthropic be removed, the DoD is said to be weighing alternatives including Elon Musk’s Grok, Google’s Gemini, and OpenAI’s models — none of which have yet been certified for the same level of classified use.

Even in defiance, Amodei offered an olive branch, pledging to “work to enable a smooth transition to another provider” so that ongoing military missions would not be disrupted if the Pentagon chose to move on. But the implications of his stance reach well beyond a single contract.

This confrontation arrives at a particularly uncomfortable moment for Anthropic. The company has long staked its identity on being the most safety-conscious frontier AI lab, a claim that came under scrutiny just days ago when the company quietly dropped its flagship safety pledge. Critics had already been watching to see whether Anthropic would hold the line when commercial pressure became real. Now, facing a $200 million threat and a potential government blacklisting, Amodei has delivered his answer.

The next move belongs to the Pentagon. Whether it follows through on its threats — and what that means for the broader question of AI companies setting limits on how their technology is weaponised — will be one of the most consequential tests yet of who ultimately controls the guardrails on advanced AI.